What Are Acceptable AI Detection Scores in Academic Writing?

Published: 26 Jan 2026

When you use AI in your writing, it’s important to understand which AI detection scores are acceptable. These scores estimate how likely it is that your text was generated by AI. There’s no one-size-fits-all rule, but many academic and professional settings treat scores under 20% as acceptable. A higher score may raise questions about the originality of your work.

A lower score usually means your content is mainly human-written, while a higher score suggests more AI involvement. It’s key to blend AI with your own ideas to keep your work authentic. Knowing these guidelines can help you use AI tools while staying true to your original voice. If you’re interested in exploring more related tools, check out my comprehensive guide on Winston AI.

What Does an AI Detection Score Really Mean?

When you check your writing with an AI detector, it gives you a percentage score. This score shows how likely it is that parts of your text look like they were written by AI. The tool doesn’t count words one by one or give a definite answer. Instead, it looks at patterns in your writing, such as sentence structure, word choice, and how predictable the text is. Think of the score as a guess, not a fact about how the text was written.

For example:

- If a tool says 70% AI, the detector judges that the text looks more like AI writing than human writing. Depending on the tool, the number may be a confidence level or an estimated share of the text. Either way, it doesn’t prove that 70% of the words were written by a machine; it’s an estimate based on patterns.

- If the score is 0% AI, it doesn’t mean there’s no AI involved; it just means the detector didn’t find obvious AI patterns.

- AI detectors can make mistakes. Sometimes they flag human writing as AI, and sometimes they miss AI content altogether.

These scores are helpful guides, but they should never be taken as absolute proof of AI or human authorship.

What Is a Good AI Detection Score?

A good AI detection score depends on where you are using the writing. There isn’t a single number that works everywhere because different places and tools measure AI scores differently. In many academic settings, a score below 20% is usually acceptable and unlikely to raise concerns about AI use.

Here’s how different AI detection scores are often understood:

- 0%–5%: Mostly written by humans, with little to no AI influence.

- 5%–20%: Accepted in many academic and professional settings.

- 20%–30%: May need a review, especially for academic work.

- 30%+: More likely to be flagged as AI-influenced and may require further checking.

This breakdown helps you understand what score is generally seen as “good” or acceptable for your needs.
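If you track scores across several drafts, you can apply these bands consistently with a few lines of code. The minimal Python sketch below assumes the article’s rule-of-thumb cut-offs and treats each boundary (5, 20, 30) as the start of the next band. The function name `interpret_score` is hypothetical, and no detector or institution is known to use these exact thresholds as official policy.

```python
def interpret_score(ai_percent: float) -> str:
    """Map an AI detection percentage to the rough bands above.

    The cut-offs are rules of thumb, not any tool's official policy.
    Each boundary value (5, 20, 30) falls into the higher band.
    """
    if not 0 <= ai_percent <= 100:
        raise ValueError("score must be between 0 and 100")
    if ai_percent < 5:
        return "mostly human-written"
    if ai_percent < 20:
        return "accepted in many settings"
    if ai_percent < 30:
        return "may need a review"
    return "may need further checking"


# Example scores from four hypothetical drafts.
for score in (3, 14, 25, 40):
    print(score, "->", interpret_score(score))
```

Check your school’s or employer’s own policy before relying on any band.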

Why AI Scores Are Not Always Perfect

AI detection tools are helpful, but they don’t always give completely accurate results. These tools are based on patterns and probabilities, so they can sometimes misjudge the text they analyze. That means a score alone shouldn’t be seen as the final truth about whether content was written by AI or a human.

Here are the main reasons why AI detection scores aren’t always perfect:

- False positives happen: This is when human‑written text gets flagged as AI‑generated by mistake. Some tools can wrongly label simple or repetitive writing as AI content.

- False negatives occur: A detector might miss AI‑written text and mark it as human‑written. This can happen when the AI output is heavily edited or paraphrased.

- Accuracy varies by tool: Different detectors have different success rates. Accuracy figures of roughly 65% to 90% are often cited, depending on the text and the tool.

- Bias and writing style issues: Some tools may misjudge work from non‑native English writers or certain styles because they were trained mostly on other kinds of text.

- Short or unconventional text is harder to judge: Tools may not work well with things like bullet points, poetry, or code, leading to incorrect results.

Because of these limitations, educators and writers are encouraged to use AI detection scores as guides, not absolute proof, and always consider context alongside human judgment.

Why Accuracy and Confidence Matter More Than the Score

When it comes to AI detection scores, the raw number isn’t everything. AI detection tools estimate how likely it is that AI was involved, but they’re not perfect and they don’t give a final answer. What really matters is the accuracy of the tool and the confidence behind the score.

Detection tools give likelihoods, not facts. For example, if a text shows 30% or 60% AI detection, it’s just a guess, not a certainty about who wrote it. Different tools can give different scores for the same piece of text.

High confidence at the extremes is more telling. Scores that are very low (close to 0%) or very high (close to 100%) suggest the detector is fairly certain it’s human or AI writing, respectively. But mid-range scores often just show uncertainty.

False positives and negatives happen too. Sometimes, AI detectors wrongly mark human-written text as AI or miss AI content altogether. This is why you can’t trust a score by itself without considering the context.

The accuracy of a detection tool depends on many things, like writing style, topic, and text length. No tool is perfect for all types of writing.

In the end, human review is still key. A high detection score usually flags the text for a closer look, but it’s not proof of anything on its own. A person reviewing the work can interpret the score better, considering the context and confidence levels.

Why a 50/50 AI Assessment Isn’t Helpful

Sometimes, AI detectors give a score that’s right in the middle, like 50/50. But this doesn’t really tell you much. These tools work based on probabilities, and a middle score often means the detector isn’t sure, not that your text is half AI and half human.

- AI detection is about probabilities, not certainties: Detection tools predict how likely your text is to be AI-generated, based on patterns. A 50% score is just an estimate, not proof that your work is AI-written.

- Middle scores show uncertainty: When the score is in the middle, it usually means the tool can’t clearly tell the difference between human and AI writing.

- False positives and negatives happen: Detectors sometimes misidentify human text as AI or miss AI text completely, so an unclear score isn’t reliable.

- Your writing style might confuse the detector: Even well-written, natural text with a clear structure or formal tone could look like AI to the tool, causing an unclear result.

- Don’t worry about a 50/50 result: A score like that isn’t a final judgment. It’s a sign that you should look at the context, review your writing style, and maybe use more than one tool to get a clearer picture.

How to Use AI Percentages Without Losing Your Mind

AI detection scores show how likely text is to be generated by AI, but these scores aren’t perfect and shouldn’t be seen as final judgments. Since different tools may have different levels of accuracy and interpretation, it’s important to use these percentages wisely.

Practical Tips:

- Use multiple detectors: Different tools check for different things and may give different results. Don’t rely on just one.

- Focus on the range: Look at the overall range of the score instead of focusing on an exact number. Very low or very high scores are clearer signals than scores in the middle, which can be uncertain.

- Consider the context: A higher score doesn’t always mean AI was used. Human writing in certain styles, like formal or structured writing, can score higher too.

- Apply your own judgment: Read the text yourself. Does it feel personal, detailed, and original? Trust your own sense of the writing.

- Add your own voice: Including your own insights or examples can help. Detectors often flag writing that sounds too predictable or generic, so make it unique.

- Remember the scores are just probabilities: They show the likelihood that AI is involved, not a final confirmation.
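The first two tips can be combined into a quick summary when you have run the same text through several detectors. This is a minimal Python sketch built on several assumptions. The detector names and scores are made up, and you would type the scores in by hand because no real tool’s API is involved. The 20-point `spread_limit` for “the tools disagree” and the 30–70 “mid-range” window are arbitrary choices for illustration.

```python
from statistics import mean


def summarize_scores(scores: dict[str, float], spread_limit: float = 20.0) -> dict:
    """Summarize AI percentages (0-100) from several detectors for one text.

    `scores` maps a detector name to its AI percentage.
    `spread_limit` is a hypothetical threshold for "the tools disagree".
    """
    if not scores:
        raise ValueError("need at least one score")
    values = list(scores.values())
    low, high = min(values), max(values)
    return {
        "low": low,
        "high": high,
        "average": round(mean(values), 1),
        # A wide gap between tools is a reason for human review, not a verdict.
        "tools_disagree": high - low > spread_limit,
        # True only if every score sits in the uncertain middle of the scale.
        "all_mid_range": all(30 <= v <= 70 for v in values),
    }


# Hypothetical scores for one essay; these are not real tool outputs.
print(summarize_scores({"detector_a": 12, "detector_b": 48, "detector_c": 35}))
```

In this made-up case, the scores range from 12% to 48%. That spread suggests you should read the text yourself rather than trust any single number.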

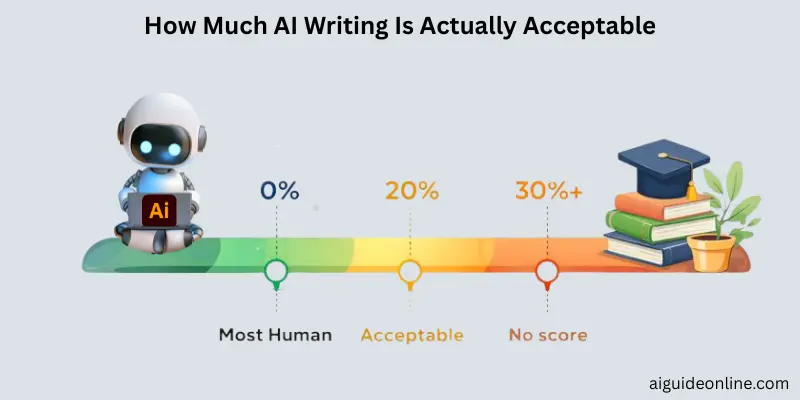

How Much AI Writing Is Actually Acceptable?

There isn’t a single number that fits every situation because what’s considered an acceptable AI writing percentage depends on where and how you’re using AI. In many academic and professional settings, lower percentages of AI involvement are preferred to keep the work feeling genuine and original.

Many educators and institutions consider AI content under 20–30% less likely to raise concerns about originality.

If AI is used for small tasks like grammar checks, summaries, or generating ideas, it’s usually more acceptable than if most of the text is AI-generated.

Turnitin and Acceptable AI Levels

Turnitin’s AI detection feature estimates how much of a submission may have been written or influenced by AI. However, it doesn’t treat all scores the same. If the AI detection score falls between 1% and 19%, Turnitin shows an asterisk (*) instead of a number. Scores in this range are considered less reliable and more likely to be false positives.

When Turnitin shows a clear percentage of AI-detected text (20% or higher), the system is more confident that AI-like language patterns are present. Even so, a higher score doesn’t automatically mean misconduct. How the score is interpreted depends on your school’s policies and the specific situation.

GPTZero, Copyleaks, and Other AI Detection Tools

AI detection tools like GPTZero and Copyleaks help people check whether a piece of writing might have been created by artificial intelligence rather than a human. These tools work in different ways. GPTZero looks for patterns in the text that are common in AI writing. Copyleaks emphasizes accuracy and says it can detect AI-written passages even when they are mixed with human-written content.

Since no tool is perfect, many people use more than one to get a better idea of how much AI might be involved in a text. Some comparisons rank Copyleaks among the more accurate tools, but GPTZero and others also have strengths depending on the type of writing and how heavily it has been edited.

How to Reduce AI Percentage in Your Content

When AI detection tools flag a high percentage in your text, it usually means your writing sounds too much like a machine, not necessarily that it’s bad. To lower your AI score, make your writing feel more natural and human. Here are some practical tips to help with that:

- Start with your own ideas: Write your main points first, and use AI to help with brainstorming or editing, not for writing the whole piece. Detectors often flag text that seems too smooth and predictable, which can happen if the whole draft is AI-generated.

- Add personal experiences: AI tends to write generic content. By including personal stories or real examples, your writing will stand out as unique and human.

- Vary your sentence structure: Mix short, medium, and long sentences to give your writing a more natural flow. If all your sentences are the same length, it can look robotic to detectors.

- Fix robotic phrases: Edit overused AI phrases and repetitive patterns. Change up sentences to avoid sounding too predictable.

- Use simple language and active voice: Don’t overcomplicate your sentences. Simple, varied language feels more human and less like AI.

- Edit with multiple tools: After rewriting, run your text through one or more AI detectors again. Focus on the passages flagged as most likely AI-generated and revise them.

- Inject your own style: Your unique tone and word choices make a big difference. AI detectors tend to flag generic, predictable writing, so let your natural voice shine through.
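You can put the tip about varying sentence structure into practice by measuring how much your sentence lengths actually vary. The Python sketch below is a rough self-check that makes two simple assumptions. It splits sentences at ., ! or ? followed by whitespace, and it counts words by splitting on spaces. This is not how Turnitin, GPTZero, or any other detector scores text, and a bigger spread does not guarantee a lower AI score. The function name `sentence_length_stats` is hypothetical.

```python
import re
from statistics import mean, pstdev


def sentence_length_stats(text: str) -> dict:
    """Rough self-check of sentence-length variety.

    Splits on ., ! or ? followed by whitespace, a simplification that
    mis-splits abbreviations such as "e.g." Words are counted by whitespace.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    return {
        "sentences": len(lengths),
        "lengths": lengths,
        "average": round(mean(lengths), 1) if lengths else 0.0,
        # Population standard deviation: 0.0 means every sentence is the same length.
        "spread": round(pstdev(lengths), 1) if lengths else 0.0,
    }


uniform = "The tool checks text. It returns a score. Scores are only guesses."
varied = ("I ran my draft through a detector last night. Forty percent. "
          "That surprised me, because I had written every paragraph myself over two weeks.")
print(sentence_length_stats(uniform))
print(sentence_length_stats(varied))
```

The first sample has sentences of identical length, so its spread is 0.0. The second mixes long and very short sentences, which is the kind of variety the tip recommends.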

Real‑Life Example: Interpreting a Detection Score

AI detection tools give a percentage that estimates how much of your writing could be AI-generated. But that score isn’t absolute. It’s a guideline that should be read with care and context.

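For example, a tool like Turnitin shows the percentage of text it thinks might be AI‑generated, but this is only an estimate, not proof of AI use. If an essay comes back at 35%, the tool has flagged roughly a third of the text as showing AI-like patterns. It has not proven that a third of the essay was machine-written.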

If the score is low (between 1% and 19%), Turnitin marks it with an asterisk instead of a number. This means the result is less reliable and could simply reflect normal writing patterns rather than AI content.

Different tools can give different scores for the same text because each uses its own algorithms and training data.

A high score doesn’t automatically mean misconduct. Educators typically look at the context, drafts, and the development of the writing when interpreting these results.

It’s smart to treat the score as one signal among others, not as a final judgment. If you want to lower the AI percentage, consider rewriting parts or adding personal insights.

Conclusion

We’ve talked a lot about AI detection scores and how they aren’t set in stone. Different tools can give different results for the same text. These scores are just guides, not final decisions. The main thing is to focus on the quality of your writing, avoid relying too much on any one tool, and always keep the context in mind.

My advice? Don’t worry if your score isn’t perfect. If you’re unsure, rewrite, add your personal touch, and treat AI detection as a tool to improve your writing, not as a judge of your effort. Keep learning, stay tuned for more tips, and remember, you’ve got this!

FAQs

Is a 40% AI detection score bad?

A 40% AI detection score is higher than you’d want, but it doesn’t mean your work is entirely AI-generated. It suggests that a significant portion of the content might have AI influence. Educators or reviewers may look more closely at your work to check how much of it is original. If possible, try rewriting parts of it to lower the percentage.

How do educators use AI detection scores?

Educators use AI detection scores as one tool to evaluate originality. A high score could raise questions, but they’ll also consider your writing process, drafts, and the overall quality of your work. If you’re unsure, it’s always a good idea to explain how you created your content.

What is the 80/20 rule in AI writing?

The 80/20 rule in AI writing means letting AI handle about 80% of the routine work, such as brainstorming or drafting, while you supply the remaining 20% as your own unique input. This approach helps you balance efficiency with originality, keeping your work personal and authentic.

Can I use AI to write SEO content?

Yes, you can use AI to write SEO content, but make sure to add your personal touch. AI can help with structure and keyword optimization, but it’s important to include original ideas and unique phrasing so your content stands out and ranks well.

Is a 10% AI detection score acceptable?

A 10% AI detection score is usually acceptable in many settings. It suggests at most a small amount of AI influence, which is fine as long as the content remains mostly original. However, context matters: academic writing may carry different expectations than business content.

What if my essay has 30% AI detected?

If your essay has 30% AI detected, it could raise concerns, especially in academic settings. You might be asked to explain or revise your work. It’s a good idea to edit your content and reduce the AI influence so that more of your original ideas come through.

What is a good AI detection score?

A good AI detection score is generally considered to be below 20%. This suggests that most of your content is original, with minimal AI influence. Keeping the score low is always best to avoid any issues with originality.

Is a 14% AI detection score bad?

A 14% AI detection score isn’t bad. It’s considered low and acceptable in most situations. It suggests that only a small part of your content was influenced by AI, and it shouldn’t be a cause for concern if the work is still original.

Is a 7% AI detection score bad?

No, a 7% AI detection score is very low and perfectly fine. It means most of your work is original, with at most a tiny amount of AI influence. You’re in a great spot with that percentage!
